PI: Helen Armstrong.

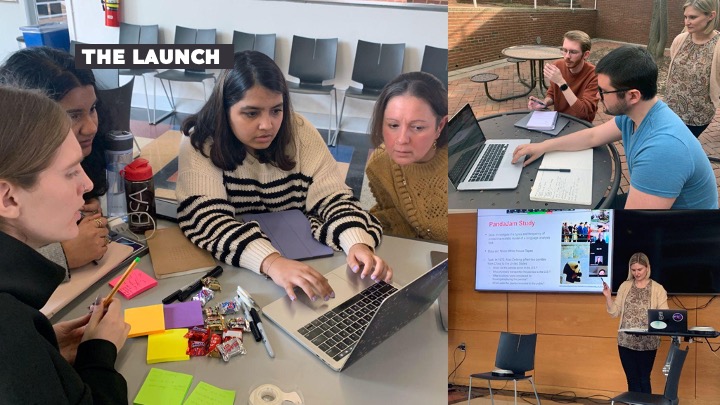

Master of Graphic & Experience Design (MGXD) students recently collaborated with the Laboratory of Analytic Sciences (LAS) here at NC State to explore the potential of machine learning to assist in voice language analysis tasks.

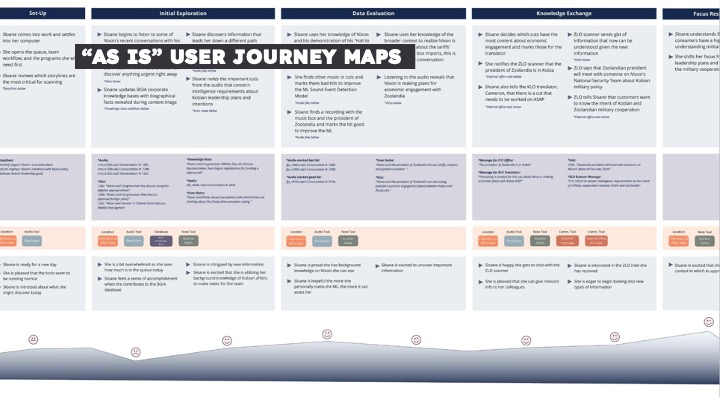

As AI/ML technologies become more prevalent in modern workflows, new conveniences emerge even as some cognitive burdens increase. The advent of automatic speech recognition and other AI/ML technologies, for example, has not only added more tasks to language analysts’ workflows but also unleashed new possibilities for information retrieval and exploration. In this project, MGXD students used human-centered research methods to prototype a unified environment that enables analysts to take full advantage of novel technologies while pivoting seamlessly between the various stages of their workflow.

Although our research team focused specifically on voice language analysis in the intelligence community for this project, this kind of interface has many applications across other domains: helping hearing-impaired individuals understand the nuances of a conversation; facilitating better cross-cultural communication in business or government policy meetings; helping academic researchers gather and analyze material from a vast array of lectures and conversations; and capturing and preserving the nuances of oral languages. Machine learning is opening up powerful new ways of engaging with human language that can provide critical insight and enable action.

The core research question of this project:

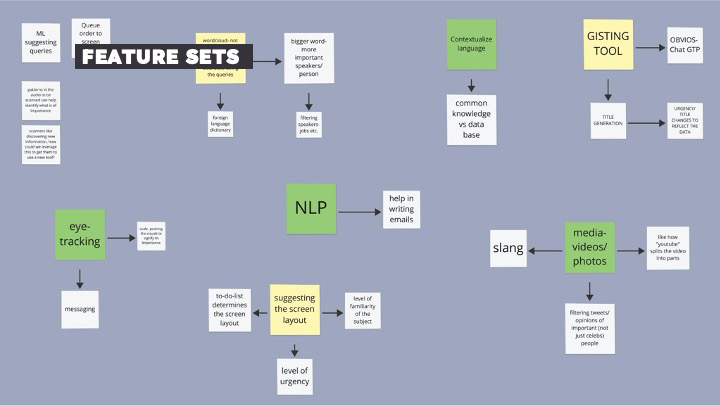

How might the design of an interface use the affordances of ML to enable voice language analysts to quickly produce reliable and robust intelligence that accurately conveys content, intent, and context?

Students were asked to:

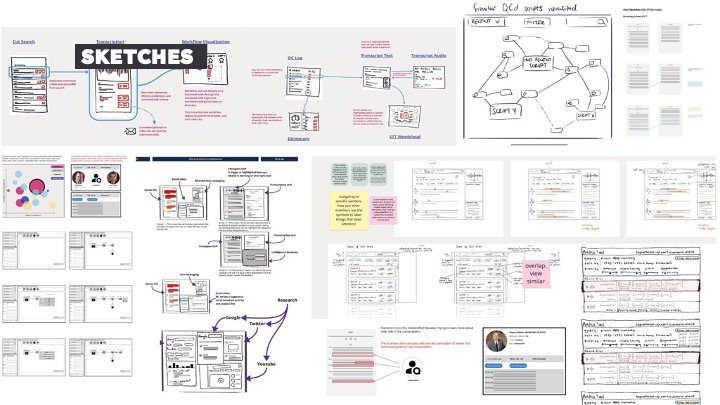

- Envision a unified interface environment

- Prototype new AI-powered ways of searching & finding

- Visualize patterns in the data

- Focus on language analyst needs/desires to create an enjoyable user experience

This material is based upon work done, in whole or in part, in coordination with the Department of Defense (DoD). Any opinions, findings, conclusions, or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the DoD and/or any agency or entity of the United States Government.

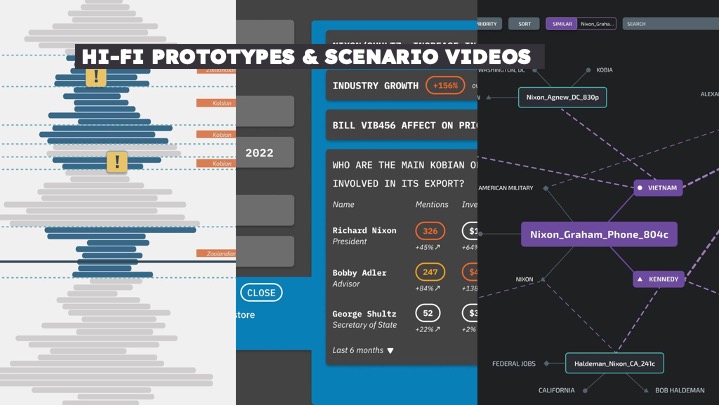

Final Prototypes

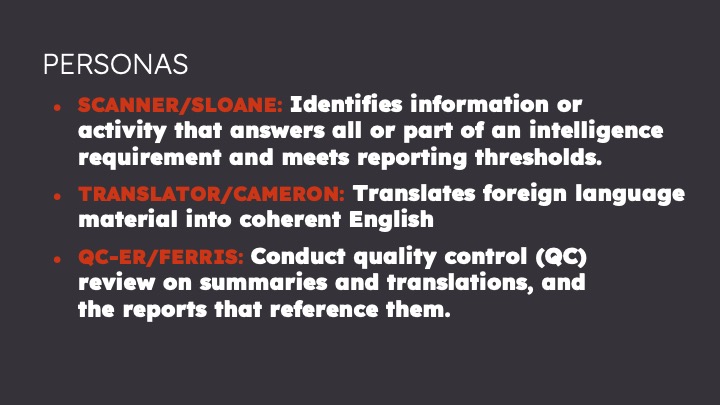

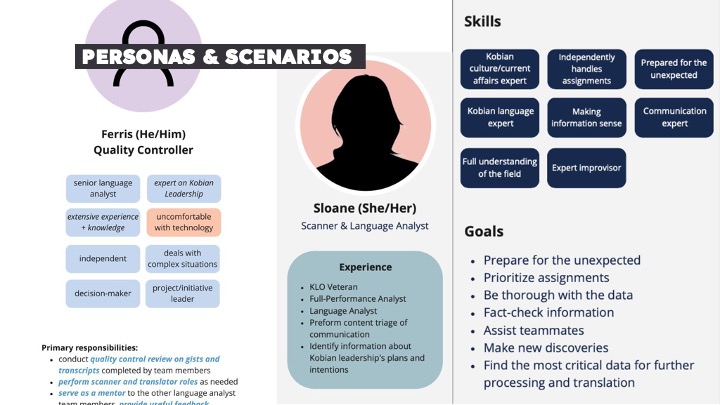

Scanner Persona. Designers: Diksha Bahirwani, Isha Parate, Kayla Rondinelli

Translator Persona. Designers: Ned Babbott, Kevin Ward

Quality Control Persona. Designers: Sasa Crkvenjas, Adam Noel

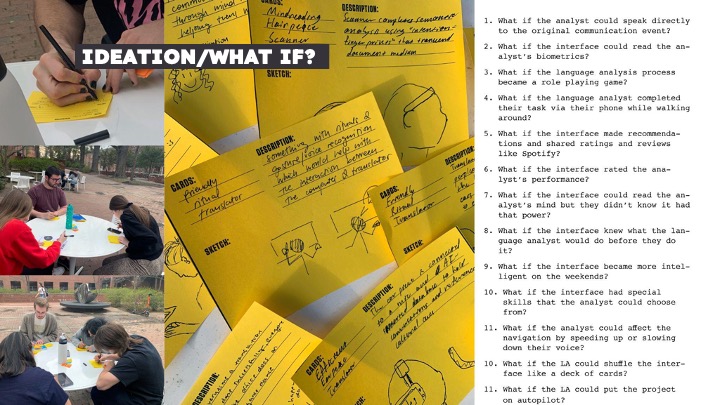

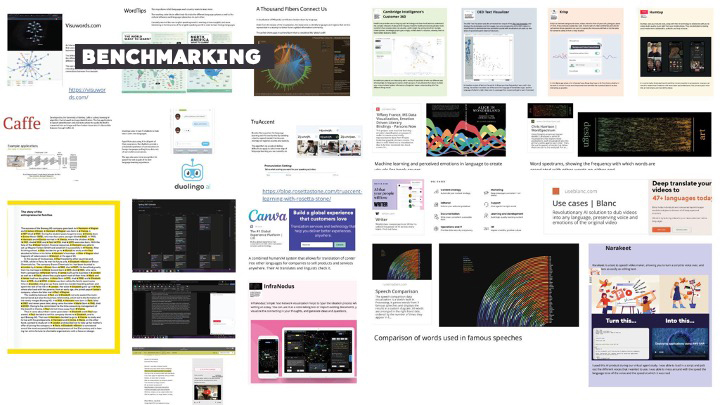

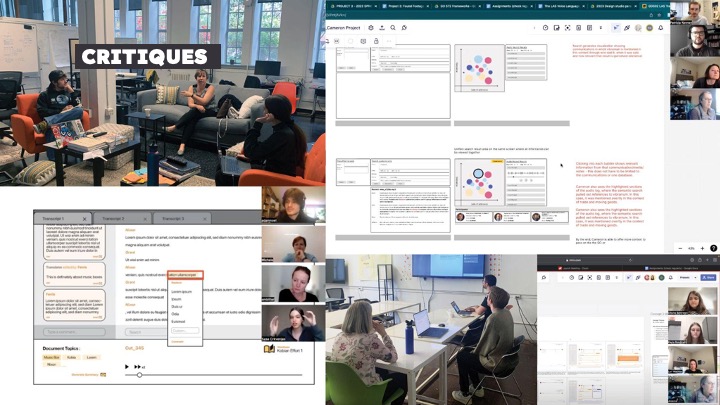

Human-Centered Design Process